Image Segmentation Evaluation Using Standard Metrics

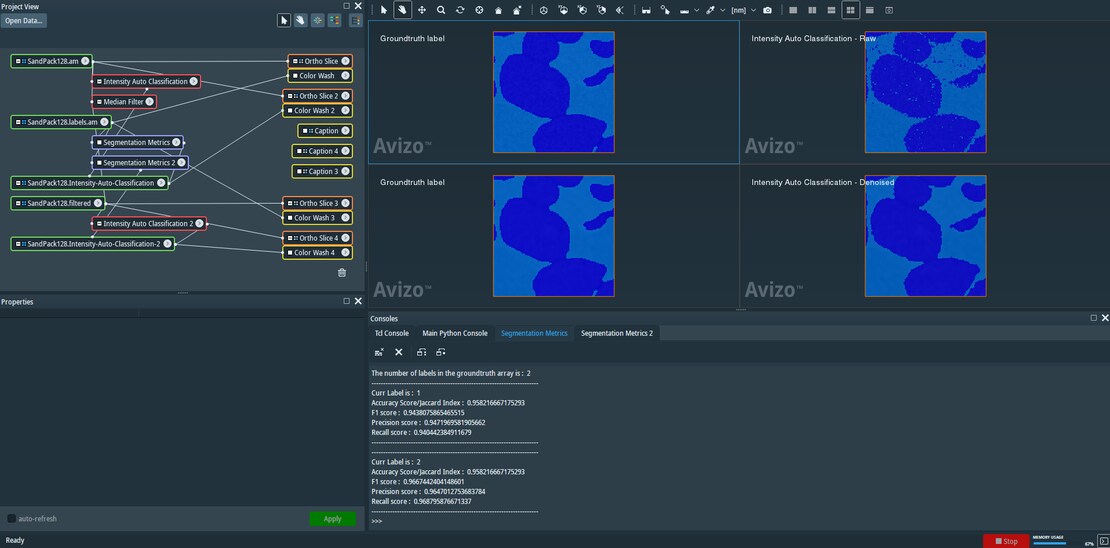

Segmentation evaluation using standard precision and recall metrics to compare two segmentation label fields.

This Xtra computes common image segmentation metrics and reports accuracy, F1 score, precision, and recall.

The Xtra shows up in the Xtra>Compute folder when right-clicking a label field.

The outputs are sent to the console. View the console by clicking the Show button on the Console port. Click Apply to compute the metrics.

Note that the metrics are computed as binary metrics for every material in the label field. Materials are compared pairwise by ID: each material in the Ground Truth label field is compared with the material that has the same ID in the Comparison label field. Therefore, make sure that the material IDs match in both data sets before interpreting the metrics.

The Python script accepts two inputs:

Groundtruth/Gold Standard : (as HxUniformLabelField3)

Prediction/Predicted labels : (as HxUniformLabelField3)

As a sanity check, the script verifies that the two inputs have the same dimensions; if they do not, it returns with a text message.
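
The dimension check can be pictured with a minimal sketch like the one below. It assumes the two label fields are already available as NumPy arrays; the function name `check_same_shape` and the message text are hypothetical and are not taken from the Xtra's script.

```python
import numpy as np


def check_same_shape(ground_truth, prediction):
    """Return True if both arrays have identical dimensions.

    Hypothetical helper: prints a message and returns False on a mismatch,
    mirroring the "return with a text message" behavior described above.
    """
    if ground_truth.shape != prediction.shape:
        print(
            "Dimension mismatch: ground truth is "
            f"{ground_truth.shape}, prediction is {prediction.shape}."
        )
        return False
    return True


# Two toy 3D label volumes with matching and non-matching dimensions.
same = check_same_shape(np.zeros((4, 4, 2)), np.zeros((4, 4, 2)))
different = check_same_shape(np.zeros((4, 4, 2)), np.zeros((4, 4, 3)))
```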

The script also uses numpy’s unique function to find the unique labels in the ground truth array, so no specific ordering of the labels is required. For example, material IDs can be numbered sequentially (1, 2, 3, ...), or they can be arbitrary non-sequential integers (1, 10, 50, 60). This makes it possible to compare the label fields of choice without imposing a numbering or sequence-based restriction.
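
As a small illustration of this step, the sketch below applies `numpy.unique` to a toy ground truth array with non-sequential material IDs. The array contents and the variable names are made up for the example.

```python
import numpy as np

# Toy 2D ground truth with non-sequential material IDs (illustrative values).
ground_truth = np.array([
    [1, 10, 10],
    [50, 60, 1],
])

# np.unique returns the sorted distinct values found in the array,
# so the IDs do not need to be contiguous or start at a fixed number.
labels = np.unique(ground_truth)

for label in labels:
    print(f"Found material ID {label}")
```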

Each material ID is then evaluated individually as a binary problem: in both the ground truth image and the predicted image, every location that carries the current label is set to boolean ‘True’ and every other location to ‘False’. All metrics are reported per material ID and for the entire image (2D or 3D).
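
The per-material binary evaluation can be sketched as follows. This is an illustrative reconstruction using the standard definitions of precision, recall, F1, and accuracy, not the Xtra's actual code. The function name `binary_metrics` is hypothetical, and returning 0.0 when a denominator is zero is an assumption made for this example.

```python
import numpy as np


def binary_metrics(ground_truth, prediction, label):
    """Compute binary metrics for one material ID (hypothetical helper)."""
    # Boolean masks: True where the current label occurs, False elsewhere.
    gt = ground_truth == label
    pred = prediction == label

    tp = np.count_nonzero(gt & pred)    # label in both
    fp = np.count_nonzero(~gt & pred)   # label only in the prediction
    fn = np.count_nonzero(gt & ~pred)   # label only in the ground truth
    tn = np.count_nonzero(~gt & ~pred)  # label in neither

    # Assumption: a metric with a zero denominator is reported as 0.0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    accuracy = (tp + tn) / gt.size

    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "accuracy": accuracy,
    }


# Toy 2D example with two materials; one location of material 1 is mislabeled.
ground_truth = np.array([[1, 1, 2], [2, 2, 1]])
prediction = np.array([[1, 2, 2], [2, 2, 1]])

results = {
    int(label): binary_metrics(ground_truth, prediction, label)
    for label in np.unique(ground_truth)
}
```

The same function works unchanged on 3D arrays, because the comparisons and counts are element-wise and independent of the number of dimensions.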